Conveying Emotions Through Avatars

Avatars are everywhere. These graphical representations are used in gaming, social networking, and even marketing to characterise humans in a virtual environment.

<iframe width="100%" height="450" src="https://www.youtube.com/embed/sbZX4MIzL4c" title="YouTube video player" frameborder="0" allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture" allowfullscreen></iframe>

In late 2018, Vodafone’s latest employee, Kiri, was introduced to the public. She is an AI simulation developed as a digital assistant using UneeQ’s latest technologies. She is incredibly realistic.

The growing interest in and adoption of online services has propelled market demand for intelligent virtual assistants. The market was valued at USD 3.7 billion in 2019 and is expected to keep growing over the coming years.

Avatars range in appearance from objects like Clippy in MS Word, to animals, to humanoids. Whether they look cartoonish or realistic, in most cases they do not come with convincing behaviour models in their design and structure.

“When dealing with people, remember you are not dealing with creatures of logic, but creatures of emotion.” Dale Carnegie

Emotions are inherent to humans, as they express the inner workings of the brain. Avatars aren’t wired to feel emotions or to respond subjectively, so it takes a lot of technological augmentation for them to deliver human-like interactions.

So how do avatars convey emotions?

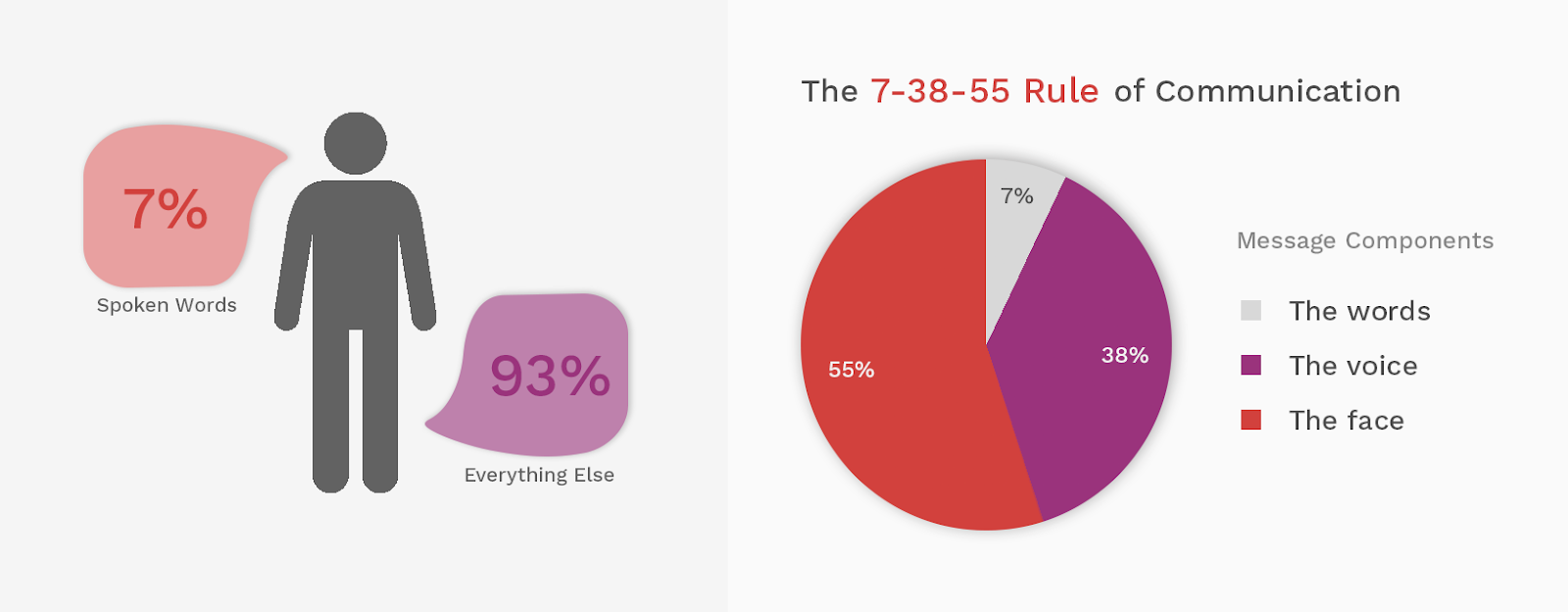

A widely cited model of communication, based on Albert Mehrabian's research, suggests that up to 93% of communication is nonverbal.

Body language and gestures

These nonverbal cues speak volumes and often convey emotions more strongly than verbal ones. Different postures, limb positions, and body movements correspond to different emotions.

We've heard about the power stance and “Wonder Woman pose” - these are postures that convey confidence. Rubbing the chin implies introspection, a fist pump expresses feelings of victory, a thumbs up confirms approval, and so on.

Facial expressions

Facial expressions are the most universal form of body language and the most important visual channel for perceiving and expressing human emotions. Facial motion capture technologies are now used to emulate human facial expressions and build them into the design and structure of avatars.

Character design

The character design of an avatar defines its personality and how trustworthy and attractive it appears to users. Character design also guides the motion capture actor in portraying the avatar’s facial expressions and body language accurately.

Animation

Animation communicates the character's personality, how it behaves in different situations, and how it interacts with users. Principles of animation such as squash and stretch or exaggeration shape an avatar’s movements to make its personality solid and relatable.
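To make the squash and stretch principle a little more concrete, here is a minimal Python sketch. It assumes the common convention that a shape keeps its volume while it deforms: when it stretches along one axis, it thins along the other two. The function name `squash_and_stretch` and its `stretch` parameter are hypothetical and chosen only for illustration. They do not come from any particular animation tool.

```python
import math
from typing import Tuple


def squash_and_stretch(stretch: float) -> Tuple[float, float, float]:
    """Return (x, y, z) scale factors for a stretch along the y axis.

    Illustrative assumption: volume is preserved, so x * y * z == 1.
    A value above 1 stretches the shape; a value below 1 squashes it.
    """
    if stretch <= 0:
        raise ValueError("stretch must be positive")
    # The two remaining axes share the compensation equally.
    side = 1.0 / math.sqrt(stretch)
    return (side, stretch, side)


# A bouncing character stretches while falling and squashes on impact.
falling = squash_and_stretch(1.5)
impact = squash_and_stretch(0.6)
```

Applying these factors to a character's scale on each frame is one simple way to give a movement a sense of weight and elasticity. Production rigs typically handle this with far more nuance.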


Mica, said to be the most realistic AI avatar assistant, has human-like facial expressions and features to make emotional connections.

This breakthrough is concrete evidence that avatars, though born of computers, can convey emotions.

We have used a variation on human avatars, 3D human modelling, to teach our own AI models. To do this, we created thousands of “fake” 3D human faces and used them to train the AI model, teaching it to recognise “real” human behaviours.

The result is Viana™, a computer vision software that provides businesses with anonymised data of their in-store customer demographics and behaviours.

Originally published as Conveying Emotions Through Avatars