Navigating Retail & Hospitality Analytics with Confidence

Why Viana's Privacy-First Approach is Not Facial Recognition

The Australian Privacy Commissioner's recent determination regarding Australian retail giant Kmart's use of in-store facial recognition technology has rightly put a spotlight on how customer data is handled in retail spaces. We see this as an opportunity to clarify the crucial differences between facial recognition and anonymous vision analytics.

To be clear from the outset: meldCX's Viana™ platform is not Facial Recognition Technology (FRT).

Viana is built from the ground up on a 'Privacy by Design' foundation. Unlike FRT, which captures sensitive biometric data to identify who a person is, Viana is designed to understand anonymous behaviour—how many people are in a space and where they go. We achieve this through immediate, on-device anonymisation, a process that ensures no personal or biometric data is ever captured, stored, or transmitted, keeping our solution aligned with the principles of the Australian Privacy Act and GDPR. Our commitment to these high standards is independently validated by our TRUSTe Certified Privacy seal.

The rest of this article takes a deep dive into how our technology works, how it aligns with global privacy regulations, and why our approach offers a powerful, ethical, and future-proof solution for modern retail and hospitality settings.

Demystifying the Technology: What is Facial Recognition (FRT)?

At its core, Facial Recognition Technology (FRT) is used to identify or verify a specific individual. It works by capturing a person's unique facial features to create a biometric template—a digital representation of their face, often called a "faceprint."
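To make "identify or verify" concrete, here is a deliberately simplified sketch of the matching step at the heart of FRT. It is illustrative only and not any particular vendor's method: the three-number vectors are hypothetical stand-ins for the much longer templates a neural network would produce, and cosine similarity with a 0.8 threshold is an assumed comparison rule. Verification compares a new face against one stored template (1:1); identification searches a gallery of stored templates (1:N).

```python
import math
from typing import Optional

# Hypothetical stand-in for a biometric template ("faceprint"). Real systems
# derive a much longer vector from a face image with a neural network.
Template = list[float]


def cosine_similarity(a: Template, b: Template) -> float:
    """Return a score in [-1, 1]; values near 1.0 mean very similar templates."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def verify(probe: Template, enrolled: Template, threshold: float = 0.8) -> bool:
    """1:1 verification: is the probe the same person as the enrolled template?"""
    return cosine_similarity(probe, enrolled) >= threshold


def identify(probe: Template, gallery: dict[str, Template],
             threshold: float = 0.8) -> Optional[str]:
    """1:N identification: best-matching enrolled identity, or None if no match."""
    best_name: Optional[str] = None
    best_score = threshold
    for name, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# The stored gallery of faceprints is the sensitive biometric data at issue.
gallery = {"person_a": [0.9, 0.1, 0.3], "person_b": [0.1, 0.8, 0.5]}
probe = [0.88, 0.12, 0.31]  # hypothetical template computed from a new camera frame

print(identify(probe, gallery))  # prints person_a
```

The key element is the `gallery`: identification only works because faceprints are kept and compared, and that stored biometric data is exactly what privacy law treats as sensitive.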

Creating a biometric template is what matters most from a regulatory perspective.

- Under the Australian Privacy Act 1988, biometric templates are classified as ‘sensitive information’, which affords them a much higher level of privacy protection. The Act also makes clear that properly de-identified and anonymous data is not personal information, which is the foundation of our approach.

- Similarly, the European Union's General Data Protection Regulation (GDPR) considers biometric data used for the purpose of unique identification to be 'special category data', demanding the most stringent safeguards.

As the Australian Privacy Commissioner's recent determinations have shown, collecting this sensitive data is the central compliance challenge. It requires organisations to clear the very high bar of obtaining explicit, informed consent from every individual, or proving that a narrow legal exception applies.

The Viana Difference: Vision Analytics, Not Biometric Identification

Let us be unequivocal: Viana is a vision analytics platform, not a facial recognition system. Our technology is fundamentally different in both its purpose and its design.

Viana's goal is not to identify who a person is, but to understand anonymous behavioural patterns: how many people are in a space, where they move, and how long they engage with certain areas. This distinction is not a matter of semantics; it is the result of our commitment to "Privacy by Design and by Default", a core principle of modern data protection regulations like GDPR. Viana was architected from its inception to avoid the collection of sensitive personal information.

How We Do It: The Power of Anonymous, Temporary Object IDs

We believe transparency builds trust. Viana's process is designed to turn a live visual feed into anonymous, actionable insights without ever storing images or video. All processing occurs in real time, on the device itself.

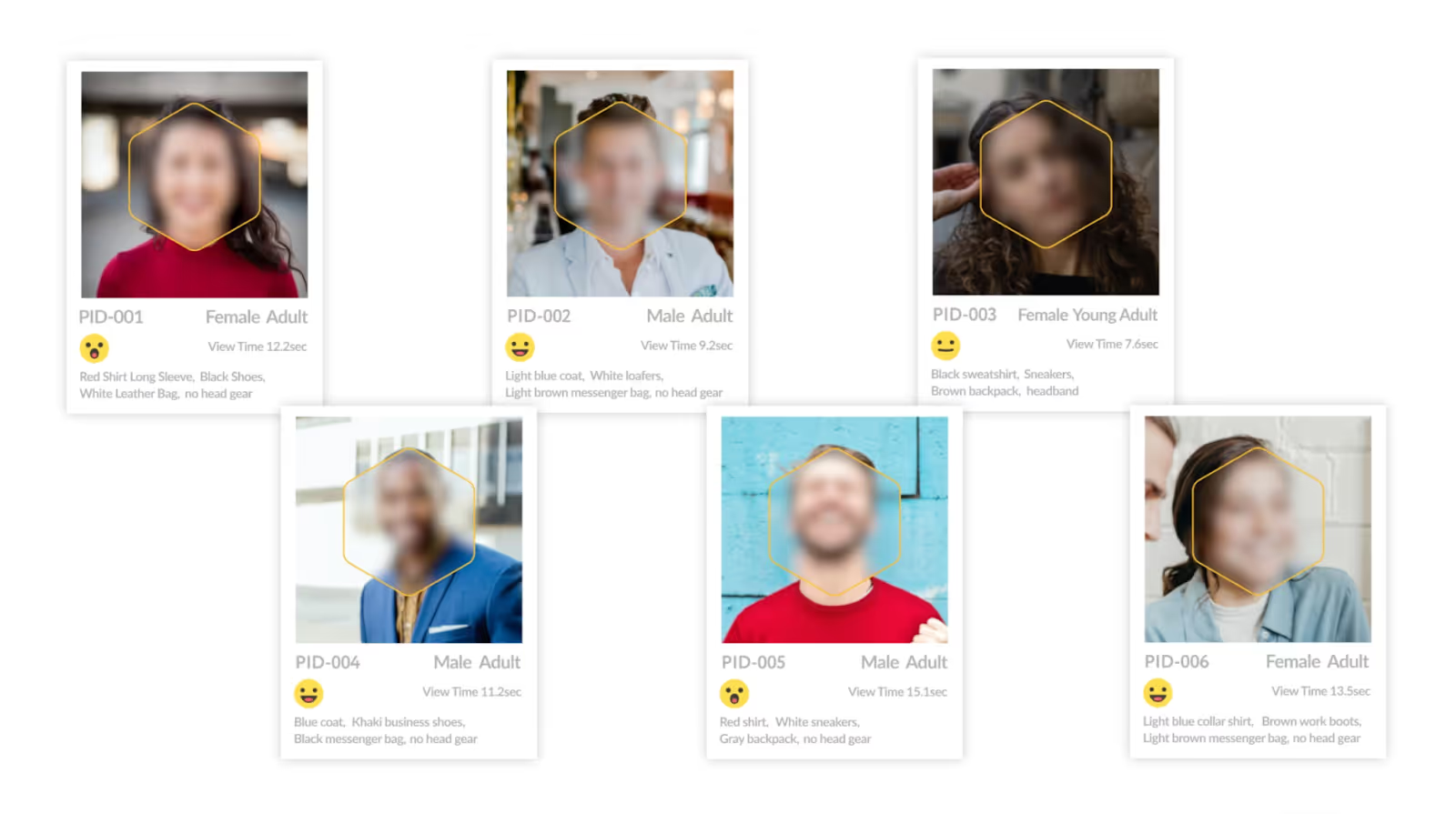

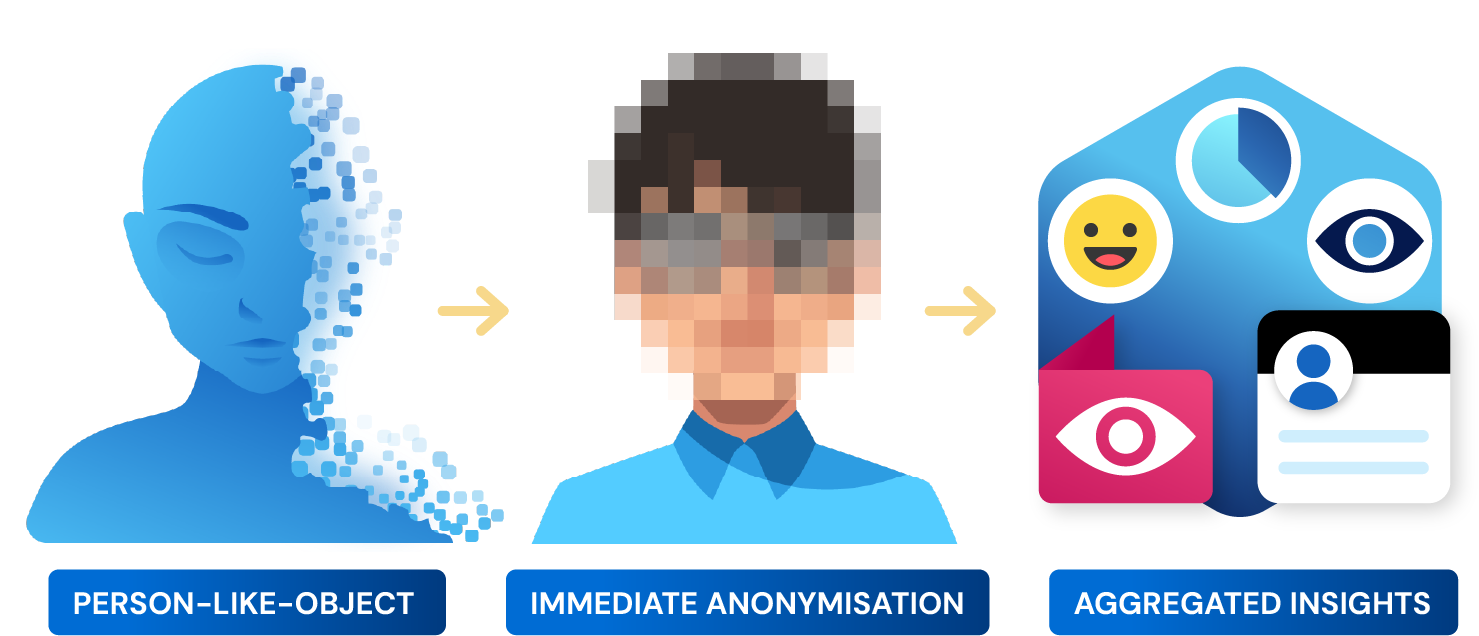

- Detection of a "Person Like Object" (PLO): The system processes the live camera stream and detects each PLO that enters the camera's field of view.

- Generation of a Temporary, Non-Linkable ID: Upon detection, the system generates a temporary, unique Object ID used solely for tracking the PLO within that single, continuous view.

- Immediate & Irrevocable Anonymisation: Simultaneously, advanced blurring and pixelation techniques are applied in real time. This ensures that no human faces, biometric templates, or personally identifiable information (PII) are ever captured, created, or stored.

- Permanent Discard of the ID: Once the PLO exits the camera's view, tracking stops, and the temporary Object ID is permanently discarded. If the same PLO re-enters the view, it is treated as a new object and assigned a completely new, unrelated Object ID. These IDs are transient and cannot be linked across sessions or cameras.

- Actionable, Aggregated Insights: Temporarily tracking these anonymous PLOs is what allows us to generate valuable, aggregated data, such as footfall counts, heat maps, and dwell times, that can never be linked back to a real-world identity.
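The five steps above can be approximated in a short sketch. It is a minimal illustration under stated assumptions, not meldCX's implementation: it assumes a hypothetical upstream detector that has already reduced each anonymised frame to a list of PLO centre points, follows each PLO between frames with simple greedy nearest-neighbour matching (the `fps`, `max_jump`, and `cell_size` values are hypothetical), and draws each temporary Object ID from `uuid.uuid4()` so the ID carries no information about the person. When a PLO is no longer matched, its ID is dropped and only its dwell time and heat-map counts remain.

```python
import math
import uuid
from collections import Counter
from dataclasses import dataclass, field

Point = tuple[float, float]  # hypothetical detector output: a PLO's centre (x, y)


@dataclass
class Track:
    position: Point
    frames_seen: int = 1


@dataclass
class Aggregates:
    """Everything that is kept: counts and durations, never IDs or pixels."""
    footfall: int = 0
    dwell_seconds: list[float] = field(default_factory=list)
    heatmap: Counter = field(default_factory=Counter)


class EphemeralTracker:
    def __init__(self, fps: float = 10.0, max_jump: float = 50.0,
                 cell_size: float = 100.0) -> None:
        self.fps = fps              # frames per second of the (assumed) stream
        self.max_jump = max_jump    # max movement between frames for a match
        self.cell_size = cell_size  # heat-map grid cell size
        self._active: dict[str, Track] = {}  # temporary Object ID -> track
        self.aggregates = Aggregates()

    def update(self, detections: list[Point]) -> None:
        """Process one frame's PLO detections, then let the frame go."""
        unmatched = list(detections)
        survivors: dict[str, Track] = {}
        for object_id, track in self._active.items():
            # Greedy nearest-neighbour match to the PLO's last position.
            last = track.position
            nearest = min(unmatched, default=None,
                          key=lambda p: math.dist(p, last))
            if nearest is not None and math.dist(nearest, last) <= self.max_jump:
                unmatched.remove(nearest)
                survivors[object_id] = Track(nearest, track.frames_seen + 1)
            else:
                self._close(track)  # PLO left the view; its ID is dropped
        for point in unmatched:
            # New PLO: a random ID that encodes nothing about the person.
            survivors[uuid.uuid4().hex] = Track(point)
            self.aggregates.footfall += 1
        self._active = survivors
        for track in self._active.values():
            cell = (int(track.position[0] // self.cell_size),
                    int(track.position[1] // self.cell_size))
            self.aggregates.heatmap[cell] += 1

    def flush(self) -> None:
        """End of stream: close every open track."""
        for track in self._active.values():
            self._close(track)
        self._active = {}

    def _close(self, track: Track) -> None:
        # Only the dwell time survives; the Object ID is never recorded.
        self.aggregates.dwell_seconds.append(track.frames_seen / self.fps)


tracker = EphemeralTracker(fps=1.0)
tracker.update([(10.0, 10.0)])  # frame 1: a PLO enters
tracker.update([(20.0, 15.0)])  # frame 2: it moves a little (same ID)
tracker.update([])              # frame 3: it leaves, so its ID is discarded
tracker.update([(20.0, 15.0)])  # frame 4: re-entry gets a brand-new ID
tracker.flush()
print(tracker.aggregates.footfall, tracker.aggregates.dwell_seconds)  # prints 2 [2.0, 1.0]
```

Because a returning PLO receives a fresh ID, `footfall` in this sketch counts entries into the view rather than unique people. That is the trade-off that keeps the IDs non-linkable, and it matches the re-entry behaviour described in the steps above.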

Our Demonstrable Commitment to Privacy and Ethical AI

A commitment to privacy must be demonstrable, not just declared. At meldCX, our practices are validated against global standards and designed to align with key regulatory principles.

Governance, Assurance, and Our TRUSTe Certification

Our privacy governance is verified through third-party assurance. meldCX has demonstrated that its privacy policies and practices meet the rigorous TRUSTe Enterprise Privacy & Data Governance Practices Assessment Criteria, earning the TRUSTe Certified Privacy seal. This provides our customers with independent, ongoing assurance that our privacy framework is robust and meets globally recognised standards.

Necessity and Proportionality

A key finding in the Privacy Commissioner's determination on Kmart was that its use of FRT was a disproportionate invasion of privacy for the stated goal. Viana, in contrast, is a proportionate tool. It achieves the business objective of understanding customer behaviour by the least privacy-intrusive means possible: fully anonymised analytics.

Ethical AI and Avoiding Bias

A significant risk in any AI system is algorithmic bias, and we address it from the very beginning. Viana’s AI models are not trained using photographs or videos of real people. Instead, we use our proprietary 3D engine to create synthetic ("synth") data. This allows us to generate millions of diverse scenarios to train our models effectively, reducing bias and protecting individual privacy from the ground up.

Forward-Looking Compliance (EU AI Act)

We design our technology not just for today's regulations, but for tomorrow's. The recently enacted EU AI Act places strict obligations on 'high-risk' AI systems, a category that includes remote biometric identification, and prohibits real-time remote biometric identification in publicly accessible spaces for law enforcement purposes, save for narrow exceptions. By focusing on anonymised analytics, Viana is intentionally designed to operate outside these high-risk categories, providing our customers with a powerful, future-proof solution.

Conclusion: Gaining Insight Without Compromising Trust

The regulatory landscape is sending a clear signal: the use of technologies that capture sensitive biometric information faces intense and justified scrutiny.

However, the need for retailers to understand their physical environments to improve service, layout, and efficiency remains. Viana proves that these two realities are not mutually exclusive. It is entirely possible to gain powerful, data-driven insights without compromising customer trust or breaching privacy regulations.

Viana offers a compliant, ethical, and effective alternative that delivers profound business value while fundamentally respecting individual privacy.